Research

Gesture Understanding

Motion Capture

To understand human action, we must first observe human motion. We aim to realize a markerless, real-time motion capture system. Eight cameras are arranged in a circle centered on the subject, and the human posture is estimated by analyzing the multi-viewpoint video. To construct real-time computer vision systems with multiple viewpoints, we have proposed RPV, a parallel programming environment on a PC cluster.

International Conferences (Peer-reviewed)

- Takashi Saiki, Atsushi Shimada, Daisaku Arita, Rin-ichiro Taniguchi

A Vision-based Real-time Motion Capture System using Fast Model Fitting

CD-ROM Proc. of the 14th Korea-Japan Joint Workshop on Frontiers of Computer Vision, 2008.01

- Naoto Date, Hiromasa Yoshimoto, Daisaku Arita, Rin-ichiro Taniguchi

Real-time Human Motion Sensing based on Vision-based Inverse Kinematics for Interactive Applications

Proc. of International Conference on Pattern Recognition, Vol.3, pp.318-321, 2004.08

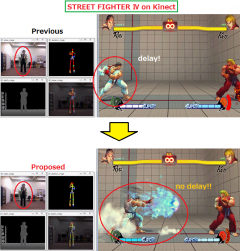

Early Recognition of Gestures

We are researching early recognition of gesture patterns. Conventional recognition typically reports a result only after the gesture has been observed, which delays the interaction between human and machine. Our proposed method performs early recognition: it outputs the recognition result as soon as it observes a posture that is highly unique to one gesture, which resolves this delay.

Moreover, early recognition requires accessing the training data and matching the input postures against it. We propose a recognition method whose computational cost does not depend on the size of the training data, by using hash search instead of exhaustive search for this matching.
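The two ideas above (answering as soon as a posture is unique to one gesture, and looking postures up by hash rather than scanning the training data) can be illustrated with a minimal sketch. It is not the method from the publications below. The quantization step, the function names `quantize`, `build_index` and `recognize_early`, and the toy two-dimensional postures are all assumptions made for illustration.

```python
from collections import defaultdict


def quantize(posture, step=0.25):
    """Turn a posture (sequence of floats) into a hashable key by coarse
    quantization. The step size is an illustrative assumption."""
    return tuple(round(x / step) for x in posture)


def build_index(training, step=0.25):
    """Build a hash table: quantized posture -> set of gesture labels.

    `training` maps each gesture label to a list of posture sequences.
    """
    index = defaultdict(set)
    for label, sequences in training.items():
        for sequence in sequences:
            for posture in sequence:
                index[quantize(posture, step)].add(label)
    return index


def recognize_early(stream, index, step=0.25):
    """Return (label, frames_seen) at the first posture whose key belongs to
    exactly one gesture, or (None, frames_seen) if no such posture appears.

    Each frame costs one hash lookup on average, independent of how many
    training sequences were indexed.
    """
    frames = 0
    for frames, posture in enumerate(stream, start=1):
        labels = index.get(quantize(posture, step), ())
        if len(labels) == 1:
            return next(iter(labels)), frames
    return None, frames


# Toy data: both gestures start from the same posture and then diverge.
training = {
    "wave": [[(0.0, 0.0), (0.5, 1.0), (1.0, 1.0)]],
    "point": [[(0.0, 0.0), (0.5, 0.0), (1.0, 0.0)]],
}
index = build_index(training)
result = recognize_early([(0.02, 0.01), (0.52, 0.98), (1.0, 1.0)], index)
print(result)
```

In this toy run the first frame is shared by both gestures, so no result is output. The second frame matches only "wave", so the result is returned before the gesture ends.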

International Conferences (Peer-reviewed)

- Manabu Kawashima, Atsushi Shimada, Hajime Nagahara, Rin-ichiro Taniguchi

Adaptive template method for early recognition of gestures

CD-ROM Proceedings of 17th Japan-Korea Joint Workshop on Frontiers of Computer Vision, 2011.02

- Atsushi Shimada, Rin-ichiro Taniguchi

Improvement of Early Recognition of Gesture Patterns based on Self-Organizing Map

CD-ROM Proc. of the 11th International Symposium on Artificial Life and Robotics, 2011.01

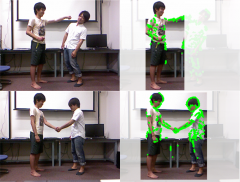

Complex Human Action Recognition

We study the recognition of complex human actions performed by two persons, known as human-human interaction recognition.

Human-human interaction recognition is a challenging research area because we must consider the relationship between the two participants as well as a variety of complex, dynamic actions.

In our research, we estimate each participant's action contribution to determine whose action is the major one, so that interactions can be recognized correctly.

International Conferences (Peer-reviewed)

- Atsushi Shimada, Manabu Kawashima, Rin-ichiro Taniguchi

Early Recognition based on Co-occurrence of Gesture Patterns

Proceedings of the 17th International Conference on Neural Information Processing, pp.431-438, 2010.11

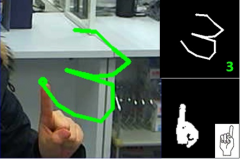

Hand Shape and Hand Motion Recognition

To enable intuitive operation of TVs and related devices, we are researching interfaces that use human gestures.

Previous research used only one kind of information, either hand shape or hand motion. However, realizing many operations this way requires a large number of hand shapes or complex hand motions.

In our research, we combine simple hand shapes and hand motions to obtain a variety of hand gestures, instead of relying on only one kind of information. With these gestures, an interface can support many operations intuitively.
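To see why combining the two kinds of information helps, the following minimal sketch pairs a few simple shapes with a few simple motions and assigns a command to each pair. The shape names, motion names and TV commands are hypothetical examples, not the vocabulary used in the publications below.

```python
from itertools import product

# Hypothetical, deliberately small sets of simple shapes and motions.
SHAPES = ("open_palm", "fist", "point")
MOTIONS = ("left", "right", "up", "down")


def build_vocabulary(shapes, motions, commands):
    """Assign each command to one (shape, motion) pair, in product order.

    Raises ValueError when there are more commands than available pairs.
    """
    pairs = list(product(shapes, motions))
    if len(commands) > len(pairs):
        raise ValueError("more commands than shape/motion combinations")
    return dict(zip(pairs, commands))


def interpret(vocabulary, shape, motion):
    """Return the command bound to a recognized (shape, motion) pair, or None."""
    return vocabulary.get((shape, motion))


# Hypothetical TV commands, used only for illustration.
commands = ["volume_down", "volume_up", "channel_up", "channel_down", "rewind"]
vocabulary = build_vocabulary(SHAPES, MOTIONS, commands)

print(len(SHAPES) * len(MOTIONS))  # number of distinct gestures available
print(interpret(vocabulary, "fist", "left"))
```

Three shapes and four motions already yield twelve distinct gestures, whereas using shapes alone would require twelve different hand shapes for the same number of operations.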

International Conferences (Peer-reviewed)

- Atsushi Shimada, Takayoshi Yamashita, Rin-ichiro Taniguchi

HOWTO SELECT USEFUL HAND SHAPES FOR HAND GESTURE RECOGNITION SYSTEM

International Conference on Pattern Recognition Applications and Methods (ICPRAM), pp.394-399, 2012.02

- Atsushi Shimada, Takayoshi Yamashita, Rin-ichiro Taniguchi

User-Customizable Hand Gesture Interface for Controlling TV

The 18th Japan-Korea Joint Workshop on Frontiers of Computer Vision, pp.129-132, 2012.02
