Tutorial of OperationCount

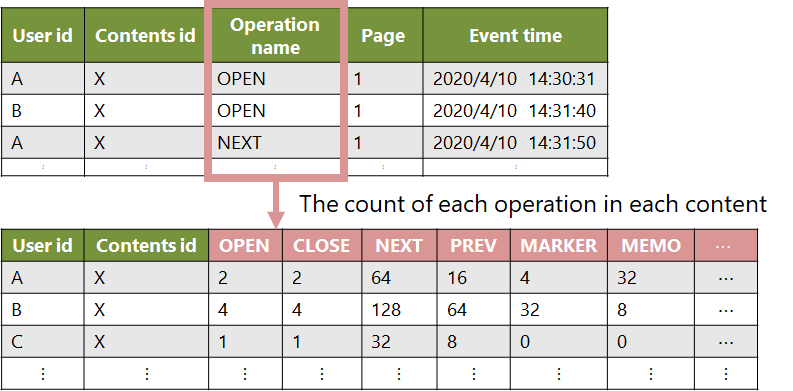

The OperationCount class holds the aggregated log as a member variable and provides methods for retrieving information from it.

import OpenLA as la

course_info = la.CourseInformation(files_dir="dataset_sample", course_id="A")

event_stream = course_info.load_eventstream()

operation_count = la.convert_into_operation_count(event_stream,

    user_id=None, # all users

    contents_id=None, # all contents

    operation_name=None # all operations

)
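To make the aggregation step more concrete, here is a minimal pandas-only sketch of counting operations per user and contents. It is not OpenLA's implementation: the helper count_operations, the column names userid, contentsid, and operationname, and the toy rows are all assumptions made for illustration.

```python
import pandas as pd

# Toy event log. The column names and rows are illustrative assumptions,
# not the actual OpenLA event stream.
events = pd.DataFrame({
    "userid": ["U1", "U1", "U1", "U2", "U2"],
    "contentsid": ["C1", "C1", "C1", "C1", "C2"],
    "operationname": ["NEXT", "NEXT", "PREV", "NEXT", "ADD MARKER"],
})


def count_operations(events, user_id=None, contents_id=None, operation_name=None):
    """Hypothetical helper: count operations per (user, contents) pair.

    A filter left as None keeps every value of that column.
    """
    selected = events
    if user_id is not None:
        selected = selected[selected["userid"].isin(user_id)]
    if contents_id is not None:
        selected = selected[selected["contentsid"].isin(contents_id)]
    if operation_name is not None:
        selected = selected[selected["operationname"].isin(operation_name)]

    # One row per (user, contents), one column per operation name.
    counts = (
        selected.groupby(["userid", "contentsid", "operationname"])
        .size()
        .unstack(fill_value=0)
    )
    counts.columns.name = None
    return counts.reset_index()


counts = count_operations(events, operation_name=["NEXT", "PREV"])
print(counts)
```

In this sketch, a user and contents pair whose events are all filtered out does not appear in the result at all. Whether OpenLA behaves the same way is not stated here.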

The methods in the table below are currently available.

You can get information about the log with calls such as operation_count.num_users(). The documentation is in the Operation Count Document.

| function | description |
|---|---|
| num_users | Get the number of users in the log |
| user_id | Get the unique user ids in the log |
| contents_id | Get the unique contents ids in the log |
| operation_name | Get the unique operation names in the log |
| operation_count | Get the count of each (or specified) operation in the log |
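As a rough illustration of what these queries return, the sketch below computes comparable values with plain pandas on a DataFrame shaped like the sample output shown later in this tutorial (its first three rows). These are pandas approximations, not the OpenLA methods themselves, and the variable names are chosen only for this example. In particular, summing each operation column is just one plausible reading of "the count of each operation".

```python
import pandas as pd

# First three rows of the sample output printed later in this tutorial.
df = pd.DataFrame({
    "userid": ["U1", "U1", "U1"],
    "contentsid": ["C1", "C2", "C3"],
    "NEXT": [299.0, 182.0, 133.0],
    "PREV": [136.0, 143.0, 39.0],
    "ADD MARKER": [2.0, 0.0, 0.0],
})

# Every column that is not an identifier is treated as an operation column.
id_columns = ["userid", "contentsid"]
operation_columns = [c for c in df.columns if c not in id_columns]

num_users = df["userid"].nunique()                # number of distinct users
user_ids = df["userid"].unique().tolist()         # unique user ids
contents_ids = df["contentsid"].unique().tolist() # unique contents ids
operation_totals = df[operation_columns].sum()    # total count per operation

print(num_users, user_ids, contents_ids)
print(operation_totals)
```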

If you need processing beyond the functions above, you can get the aggregated data as a pandas DataFrame via operation_count.df and process it with the pandas library.

import OpenLA as la

import pandas as pd

operations = ["NEXT", "PREV", "ADD MARKER"]

course_info, event_stream = la.start_analysis(files_dir="dataset_sample", course_id="A")

operation_count = la.convert_into_operation_count(event_stream,

operation_name=operations)

operation_count_df = operation_count.df

print(operation_count_df)

"""

userid contentsid NEXT PREV ADD MARKER

0 U1 C1 299.0 136.0 2.0

1 U1 C2 182.0 143.0 0.0

2 U1 C3 133.0 39.0 0.0

... ... ... ... ... ...

"""

# normalize the count of operations

def normalize(col):

    # min-max scaling: map the column's values onto the range [0, 1]
    return (col - col.min()) / (col.max() - col.min())

operation_count_df.loc[:, operations] = operation_count_df.loc[:, operations].apply(normalize, axis=0)

print(operation_count_df)

"""

userid contentsid NEXT PREV ADD MARKER

0 U1 C1 0.404054 0.303571 0.007435

1 U1 C2 0.245946 0.319196 0.000000

2 U1 C3 0.179730 0.087054 0.000000

... ... ... ... ... ...

"""